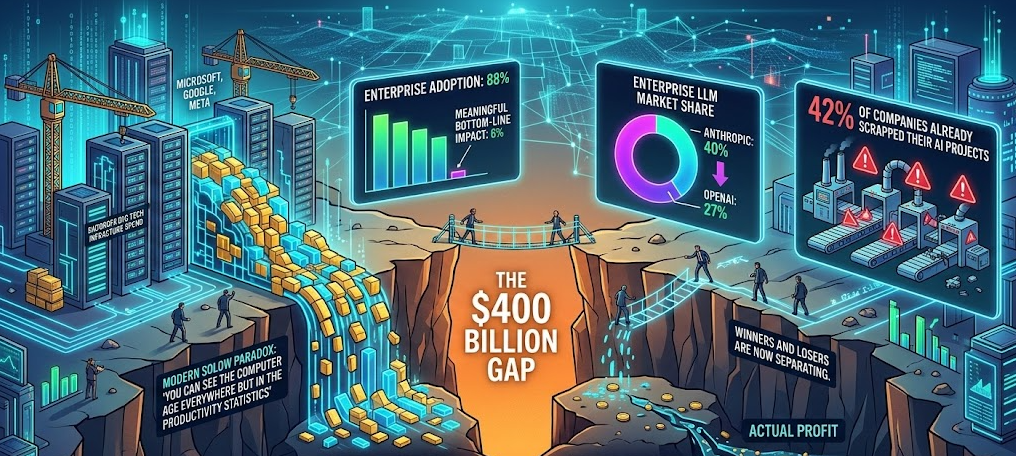

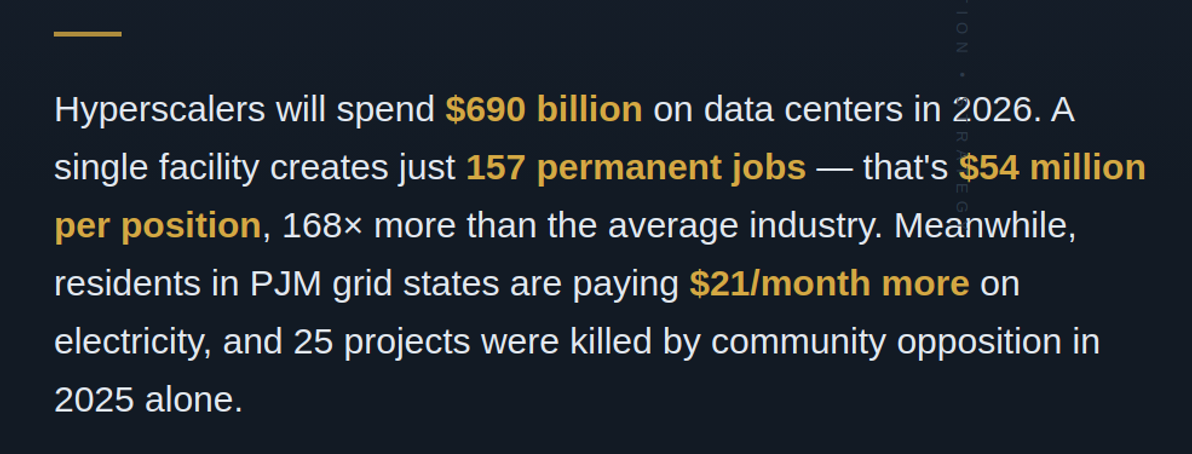

The numbers are staggering in both directions. In 2025, the five largest cloud companies — Amazon, Alphabet, Microsoft, Meta, and Oracle — spent roughly $443 billion on capital expenditure, up 73% from the prior year. Most of it went to data centers built to train and run artificial intelligence. By 2026, that figure is projected to approach $690 billion, with three-quarters earmarked for AI-specific infrastructure. Goldman Sachs estimates hyperscaler capex from 2025 through 2027 will exceed $1.15 trillion — more than double the preceding three years. HSBC pegs global planned AI infrastructure at over $2 trillion.

But something unexpected is happening on the ground. In Indianapolis, hundreds of residents packed a City-County Council chamber in September 2025, and moments before the vote, Google withdrew its 468-acre data center proposal. In Tucson, the city council voted 7–0 to reject a 290-acre Amazon-linked facility. In Caledonia, Wisconsin, 40 of 49 public speakers opposed Microsoft's rezoning bid; the company pulled its plans. Across Georgia, ten municipalities enacted moratoriums. In the first six weeks of 2026, legislators in 30-plus states filed more than 300 new bills targeting data center development. The world's most valuable companies are writing the largest infrastructure checks in a generation — and communities are sending them back.

An AI server rack now draws more power than 10 American homes

The core tension is physical. An AI GPU server consumes 4 to 10 times the electricity of a traditional cloud server. A single Nvidia Blackwell chip runs at 700–1,200 watts; the forthcoming Rubin Ultra rack could demand 600 kilowatts — enough for roughly 200 households. A hyperscale AI data center can draw as much electricity as 100,000 homes. Meta's planned facility in Louisiana would consume more than twice the power of New Orleans.

In aggregate, U.S. data centers used about 183 terawatt-hours (TWh) in 2024, over 4% of national electricity. The Electric Power Research Institute projects that share could reach 9–17% by 2030. McKinsey's estimate is 606 TWh by decade's end, more than triple current consumption. The International Energy Agency says data centers will account for almost half of all U.S. electricity demand growth through 2030.
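
The growth implied by these figures can be checked with a few lines of arithmetic. The sketch below is illustrative only: it takes the 183 TWh and 606 TWh figures quoted above and assumes smooth compound growth between 2024 and 2030, which is a simplification introduced here and not part of any cited projection.

```python
# Figures quoted in the text above
current_twh = 183.0         # U.S. data center consumption, 2024
mckinsey_2030_twh = 606.0   # McKinsey estimate for 2030
years = 2030 - 2024

# How many times larger the 2030 estimate is than 2024 consumption
growth_multiple = mckinsey_2030_twh / current_twh

# Assumption: constant compound growth between the two endpoints
implied_annual_growth = growth_multiple ** (1 / years) - 1

print(f"Growth multiple: {growth_multiple:.2f}x")
print(f"Implied annual growth: {implied_annual_growth:.1%}")
```

Under that assumption, the McKinsey endpoint works out to a bit more than triple 2024 consumption, or growth of a little over 20% a year.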

Water tells a parallel story. A large data center can consume up to 5 million gallons per day, equivalent to the use of a town of 50,000 people. Northern Virginia's facilities used 2 billion gallons in 2023, a 63% increase from 2019. Texas data centers were projected to consume 49 billion gallons in 2025, potentially ballooning to 399 billion gallons by 2030.

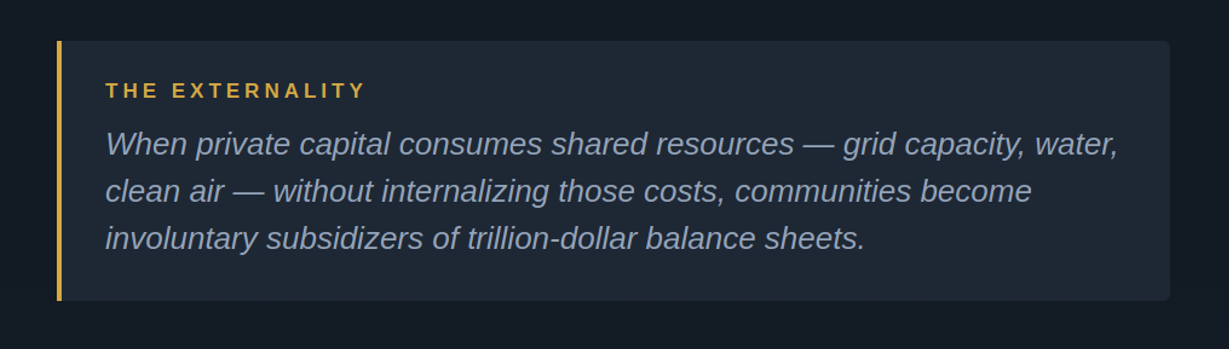

These are textbook negative externalities — costs imposed on third parties who never consented to the transaction. When a data center consumes electricity, it tightens supply across the grid. When it draws water, it competes with residential and agricultural users. When its cooling systems run around the clock, it generates low-frequency noise that disrupts neighboring homes. In Prince William County, Virginia, residents near Amazon facilities documented noise levels regularly exceeding local ordinance limits, describing a persistent hum that "shakes walls and disrupts sleep." In Southaven, Mississippi, Elon Musk's xAI Colossus facility runs 27 methane turbines that residents describe as "jet engines day and night" — prompting the NAACP and Earthjustice to file notice of a Clean Air Act lawsuit in February 2026.

The math on jobs doesn't add up

The externalities might be tolerable if data centers delivered proportional economic benefits. They don't — at least not through traditional metrics. According to a January 2026 Food & Water Watch analysis of Virginia's own economic development data, one permanent data center job costs $54 million in invested capital — 168 times more than the $322,000 average for non–data center jobs. The U.S. Chamber of Commerce found a typical large data center employs 1,688 workers during construction but just 157 permanent staff. Nationwide, as few as 23,000 people held permanent data center jobs in 2024 — a rounding error in a labor market of 160 million. Anthropic announced a $50 billion infrastructure commitment in November 2025 that would create just 800 permanent positions.
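
The ratios in this paragraph follow directly from the quoted figures. A minimal sketch, using only numbers cited above (the variable names are illustrative):

```python
# Capital invested per permanent job (Food & Water Watch, Virginia data)
capital_per_dc_job = 54_000_000
capital_per_other_job = 322_000
capital_ratio = capital_per_dc_job / capital_per_other_job

# Construction versus permanent headcount (U.S. Chamber of Commerce)
construction_jobs = 1_688
permanent_jobs = 157
construction_to_permanent = construction_jobs / permanent_jobs

# Permanent data center jobs as a share of the national labor market
national_dc_jobs = 23_000
labor_market = 160_000_000
dc_job_share = national_dc_jobs / labor_market

# Anthropic's announced commitment, expressed per permanent position
anthropic_commitment = 50_000_000_000
anthropic_jobs = 800
anthropic_capital_per_job = anthropic_commitment / anthropic_jobs

print(f"Capital per job, data center vs. other: {capital_ratio:.0f}x")
print(f"Construction jobs per permanent job: {construction_to_permanent:.1f}")
print(f"Share of national labor market: {dc_job_share:.3%}")
print(f"Anthropic capital per permanent job: ${anthropic_capital_per_job:,.0f}")
```

The Anthropic figure comes out to $62.5 million of committed capital per permanent position, in the same range as the Virginia estimate.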

This ratio demolishes the traditional economic development calculus. States and localities have long offered tax incentives to attract employers, implicitly trading foregone revenue for jobs and economic multiplier effects. But the incentive structures designed for auto plants and semiconductor fabs produce perverse outcomes when applied to data centers. Virginia's data center tax exemption, originally projected to cost $1.5 million, ballooned to $1.6 billion in foregone revenue in fiscal year 2025 — a 118% increase from the prior year. Georgia's exemptions are projected to reach $2.5 billion in FY2026, with a state audit finding that 70% of subsidized projects would have located there without incentives. Texas saw costs explode from a $130 million projection in early 2023 to $1 billion by January 2025, with cumulative losses projected at $9 billion through 2030.
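
The scale of these overruns is easier to compare side by side. The sketch below uses only the figures quoted above, with one labeled assumption: Georgia's audit reports a 70% share of projects, and applying that share to dollars is a simplification made here for illustration.

```python
# Virginia: original projection versus FY2025 cost
va_original_projection = 1.5e6
va_fy2025 = 1.6e9
va_overrun = va_fy2025 / va_original_projection
# Prior-year cost implied by the stated 118% increase (not stated in the text)
va_prior_year = va_fy2025 / (1 + 1.18)

# Georgia: FY2026 projection and the audit's 70% finding
ga_fy2026 = 2.5e9
ga_would_locate_anyway = 0.70
# Assumption: the share of projects maps one-to-one onto the share of dollars
ga_no_effect_dollars = ga_fy2026 * ga_would_locate_anyway

# Texas: early-2023 projection versus January 2025 cost
tx_projection = 130e6
tx_actual = 1.0e9
tx_overrun = tx_actual / tx_projection

print(f"Virginia cost vs. original projection: {va_overrun:,.0f}x")
print(f"Virginia implied prior-year cost: ${va_prior_year / 1e6:,.0f} million")
print(f"Georgia subsidy with no locational effect: ${ga_no_effect_dollars / 1e9:.2f} billion")
print(f"Texas cost vs. projection: {tx_overrun:.1f}x")
```

If the project share did carry over to dollars, roughly $1.75 billion of Georgia's projected FY2026 exemptions would go to facilities that needed no inducement.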

Why the standard economics playbook fails at the grid's edge

The failures here aren't subtle — they're textbook, and they stack.

Start with the basics. Data centers impose negative externalities — grid strain, water depletion, noise pollution — on parties who never consented to the transaction. Classical economics prescribes Pigouvian taxation to force producers to internalize those costs. Oregon's requirement that data centers "pay for the actual strain they place on the electrical grid" is Pigouvian logic in legislative form.
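
In textbook notation, the Pigouvian prescription can be written compactly. The symbols below are standard but are introduced here for illustration; none appear in the legislation or sources cited above. Let $q$ be the quantity of a shared resource (grid capacity or water) that a data center uses, $\mathrm{PMC}(q)$ the operator's private marginal cost, $\mathrm{MEC}(q)$ the marginal external cost borne by neighbors and ratepayers, and $\mathrm{MB}(q)$ the marginal benefit of use.

$$
\mathrm{SMC}(q) = \mathrm{PMC}(q) + \mathrm{MEC}(q), \qquad
\mathrm{MB}(q^{*}) = \mathrm{SMC}(q^{*}), \qquad
t^{*} = \mathrm{MEC}(q^{*})
$$

A per-unit charge of $t^{*}$ makes the operator face the full social marginal cost, so its privately optimal choice coincides with the efficient quantity $q^{*}$. Without the charge, the operator expands until $\mathrm{MB}(q) = \mathrm{PMC}(q)$, which overshoots $q^{*}$ whenever $\mathrm{MEC}(q) > 0$.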

But externalities are only the first layer. Grid capacity functions as a common-pool resource. Everyone shares it, but every megawatt a data center claims is unavailable to residential users. The result is a tragedy of the commons: each hyperscaler rationally maximizes its own buildout while collectively degrading the shared resource for the 67 million people served by the PJM grid. The elevenfold surge in PJM capacity auction prices, from $29 to $333 per megawatt-day, is the commons being depleted in real time.

Economists might invoke the Coase theorem here: if property rights are well-defined and transaction costs are low, private bargaining should produce efficient outcomes. A town could negotiate compensation — lower utility bills, community funds, infrastructure upgrades — in exchange for accepting a data center's footprint. In practice, the conditions for Coasean bargaining almost never hold. Information asymmetry is endemic; developers routinely use non-disclosure agreements that prevent communities from learning resource consumption figures until approvals are secured. Transaction costs are enormous — organizing thousands of affected ratepayers against a single corporate counterparty with a $200 billion balance sheet is a coordination problem of the first order. And the most significant externality, grid strain, operates through a market structure that severs the link between cause and cost.

The incentive layer is equally broken. State legislatures designed tax exemptions for manufacturers with high job-to-capital ratios. Data center operators exploited those frameworks to secure billions in foregone revenue while delivering a fraction of expected employment. Georgia's own audit — finding 70% of subsidized projects would have located there anyway — is textbook deadweight loss. The lobbying to maintain these exemptions is pure rent-seeking: resources devoted to capturing transfers rather than creating value. Meanwhile, state legislators capture political credit for headline investment figures while diffusing costs across millions of ratepayers — a classic principal–agent problem where the true cost hides in higher electricity bills rather than a visible budget line.

The community backlash is the democratic correction. The 188 grassroots groups, 238 state bills, and packed council chambers represent what happens when markets fail to price their own consequences and the political system hasn't caught up. By every standard framework, the current allocation of costs and benefits isn't close to efficient.

What an equitable framework actually looks like

The political backlash is producing policy innovation. Virginia's State Corporation Commission created a new rate class in November 2025 requiring large power users to sign 14-year commitments and bear minimum cost obligations — a structural shift toward internalizing grid costs. Oregon passed legislation requiring data centers to "pay for the actual strain they place on the electrical grid." Colorado's SB 26-102 would bar special low rates for data centers and require clean energy sourcing. In Indiana, a legal settlement with utility Indiana Michigan Power created a framework to prevent residential bill increases from data center infrastructure. Denver announced a moratorium in February 2026 to establish environmental guardrails before permitting resumes.

These interventions represent a move toward what economists call full-cost pricing — forcing data centers to internalize the externalities they currently push onto communities. The most defensible framework would combine several elements: eliminating blanket sales tax exemptions in favor of performance-based incentives tied to measurable community benefits; requiring cost-causation principles in utility rate design so that the load growth data centers create is reflected in the rates they pay, not socialized across residential customers; mandating transparent resource disclosure before land-use approvals; and establishing community benefit agreements with binding commitments on noise, water, and local hiring.
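
Cost causation is easiest to see with a toy allocation. The sketch below is hypothetical throughout: the $100 million upgrade cost, the customer-class shares, and the function names are invented for illustration and are not drawn from any utility filing. It compares spreading an upgrade across customer classes by share of energy sales with assigning it by share of the load growth that triggered the upgrade.

```python
def socialized_allocation(upgrade_cost, energy_share):
    """Spread the cost across classes in proportion to total energy sales."""
    return {cls: upgrade_cost * share for cls, share in energy_share.items()}


def cost_causation_allocation(upgrade_cost, load_growth_mw):
    """Assign the cost in proportion to each class's share of new load."""
    total_growth = sum(load_growth_mw.values())
    return {
        cls: upgrade_cost * growth / total_growth
        for cls, growth in load_growth_mw.items()
    }


# Hypothetical inputs, chosen only to illustrate the mechanism
upgrade_cost = 100_000_000
energy_share = {"residential": 0.40, "commercial": 0.35, "data_center": 0.25}
load_growth_mw = {"residential": 10, "commercial": 10, "data_center": 180}

socialized = socialized_allocation(upgrade_cost, energy_share)
causal = cost_causation_allocation(upgrade_cost, load_growth_mw)

for cls in energy_share:
    print(f"{cls:12s} socialized ${socialized[cls]:>13,.0f}   "
          f"cost-causation ${causal[cls]:>13,.0f}")
```

In this invented example, residential customers carry $40 million under the socialized method but $5 million under cost causation, because they account for only 10 of the 200 megawatts of new load.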

The data center industry is not the villain in this story — AI infrastructure is a legitimate national priority with real productivity implications. But $690 billion a year in private capital does not need $2.5 billion a year in public subsidy from Georgia alone. When a single facility creates 157 jobs while consuming as much electricity as 100,000 homes and as much water as a small city, the standard economic development playbook isn't just inadequate — it's the wrong book entirely. Communities that are saying no aren't anti-technology. They're doing the cost-benefit analysis that their state legislatures failed to do, and the numbers aren't close.

Frequently Asked Questions

How many jobs does a data center create? A typical large data center employs roughly 1,500–3,000 construction workers over 18–36 months, but only 30–157 permanent operational staff once built. In Virginia, Food & Water Watch calculated one permanent data center job per $54 million invested — compared to $322,000 per job in other industries. Industry groups claim broader economic multiplier effects of 5–8 indirect jobs per direct position, but these figures are contested.

How much electricity does an AI data center use? A hyperscale AI data center can consume as much electricity as 100,000 homes. AI-optimized server racks draw 40–100+ kilowatts each, compared to 5–15 kW for traditional racks — roughly a 4–10x increase. U.S. data centers consumed 183 TWh in 2024 (4%+ of national electricity), and projections range from 325 to 606 TWh by 2030, potentially reaching 9–17% of all U.S. power.

Do data centers raise electricity bills for nearby residents? Yes. In the PJM grid region (13 states, 67 million people), capacity auction prices rose roughly elevenfold between 2024 and 2027, from $29 to $333 per megawatt-day, with data centers responsible for 45% of costs. Residential customers in affected areas have seen monthly bills rise $16–$21. A Carnegie Mellon study projects average U.S. bill increases of 8% by 2030, with northern Virginia potentially exceeding 25%.

How much water do data centers use? A typical data center uses about 300,000 gallons per day, equivalent to roughly 1,000 households. Large AI-focused hyperscale facilities can consume up to 5 million gallons daily. Northern Virginia data centers used 2 billion gallons in 2023. Texas data centers were projected to consume 49 billion gallons in 2025, with some estimates reaching 399 billion gallons by 2030.